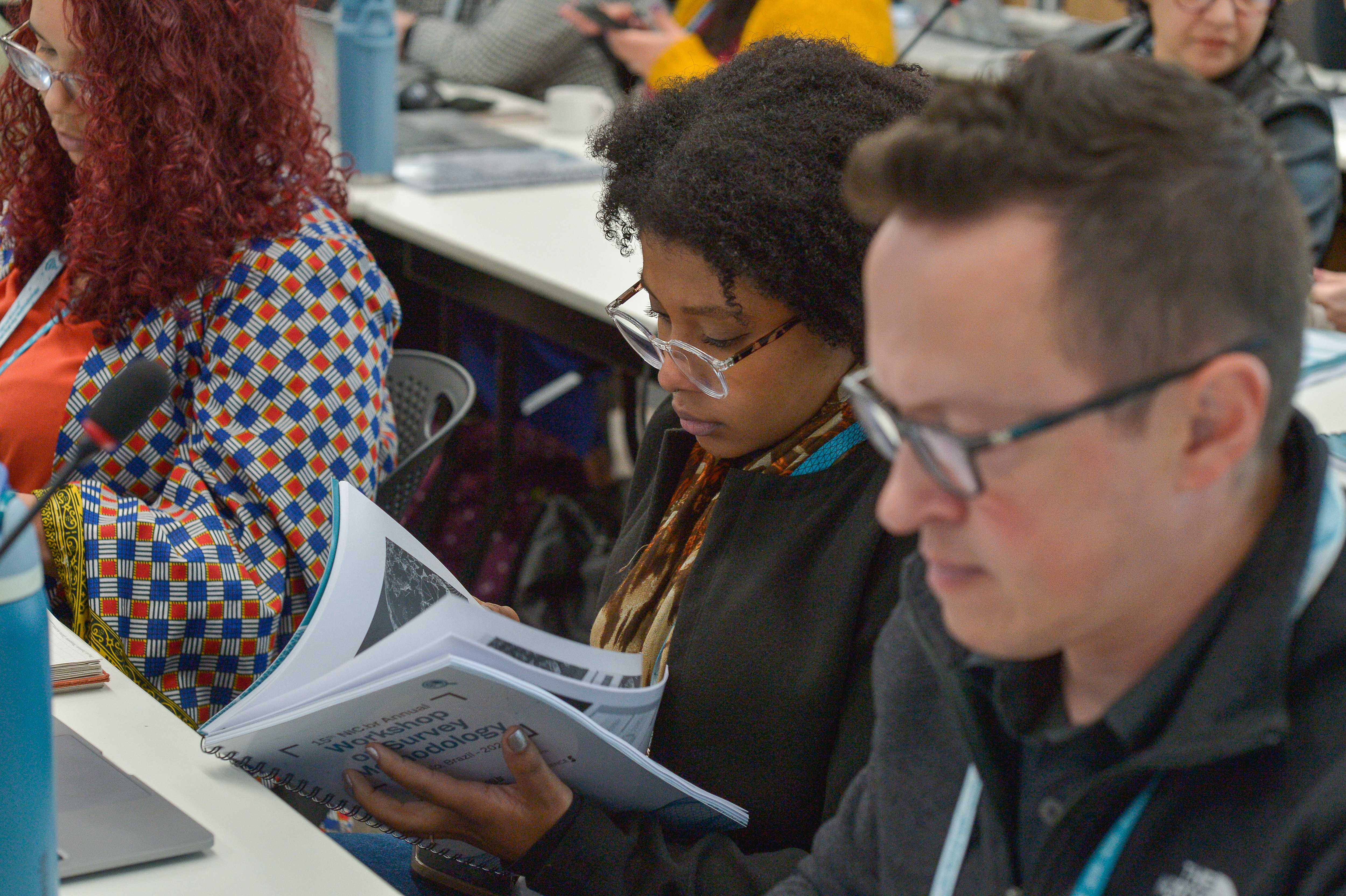

The Regional Center for Studies on the Development of the Information Society (Cetic.br) at the Brazilian Network Information Center (NIC.br), in collaboration with the National School of Statistical Sciences (ENCE) of the Brazilian Institute for Geography and Statistics (IBGE), and the Society for the Development of Scientific Research (SCIENCE), has been conducting the NIC.br Annual Workshop on Survey Methodology since 2011. The 16th Annual Workshop on Survey Methodology will be hosted by NIC.br from August 24 to 27, 2026, in São Paulo, Brazil.

The workshop has become a regional and international reference for advancing methodological innovation in survey practice and official statistics, with a particular emphasis on information and communication technology (ICT) statistics and related domains of the digital economy and artificial intelligence.

The event brings together professionals from national statistical offices and government agencies, academic and research institutions, international organizations, civil society, and the private sector to share knowledge and foster new perspectives on data production, analysis, and dissemination. As a cornerstone event in the field, the workshop addresses emerging topics in survey methodology and explores innovative approaches to improving data quality and availability. Its program combines keynote presentations, panel discussions, interactive short courses, and case studies, supporting both strategic dialogue and hands-on capacity building.

The 2026 edition, under the umbrella title “Innovative Methods for Better Data”, will build on the workshop’s legacy by focusing on methodological innovation to strengthen data quality in an increasingly complex digital ecosystem. The program will cover key topics such as AI and data science in official statistics, AI and machine learning applications across the survey lifecycle, quality management in surveys, measuring the digital economy (including e-commerce and digital trade), and the challenges of measuring and producing statistics on cybersecurity.

16th EDITION: INNOVATIVE METHODS FOR BETTER DATA: STRENGTHENING DATA QUALITY IN AN INCREASINGLY COMPLEX DATA ECOSYSTEM

The 2026 edition takes place in a rapidly evolving data environment in which traditional surveys increasingly coexist with administrative data, geospatial data, digital platform data, and other big data sources. These developments can expand analytical possibilities and improve timeliness and granularity, but they also raise challenges for statistical quality, methodological robustness, interoperability, privacy protection, and transparency. Addressing these issues is essential to produce relevant evidence for policymakers and other stakeholders working on digital inclusion, digital inequalities, and the measurement of challenges posed by AI and cybersecurity.

The 16th edition will focus on how to advance data quality by integrating statistical standards with emerging analytical methods, including machine learning and AI-enabled tools. The workshop will explore, among others, the following topics:

- AI and machine learning across the survey lifecycle (design, data collection, processing, imputation, weighting, and estimation), including implications for bias, explainability, and reproducibility.

- Data quality and Total Data Quality in multi-source environments, including quality dimensions, quality management, metadata, and interoperability between survey and non-survey sources.

- Measuring the digital economy, including e-commerce and digital trade, and the diffusion of digital technologies and their socioeconomic impacts.

- Measurement of information integrity and the digital information environment, including methodological approaches and governance considerations.

- Measuring digital infrastructure, with a focus on data centers and related sustainability dimensions in the context of digital transformation.

- Challenges of measuring and producing official statistics on cybersecurity and digital resilience, addressing practices and risks.

By exploring these themes, the workshop aims to strengthen the technical and policy-relevant dialogue on how public data producers can responsibly and effectively integrate emerging data sources and AI-enabled methods into established statistical standards and survey methodologies.